Open-source LLM tracing that catches what dashboards can't

Your agent returned a confident wrong answer. The error rate stayed at zero. Breadcrumb catches these issues before your users do.

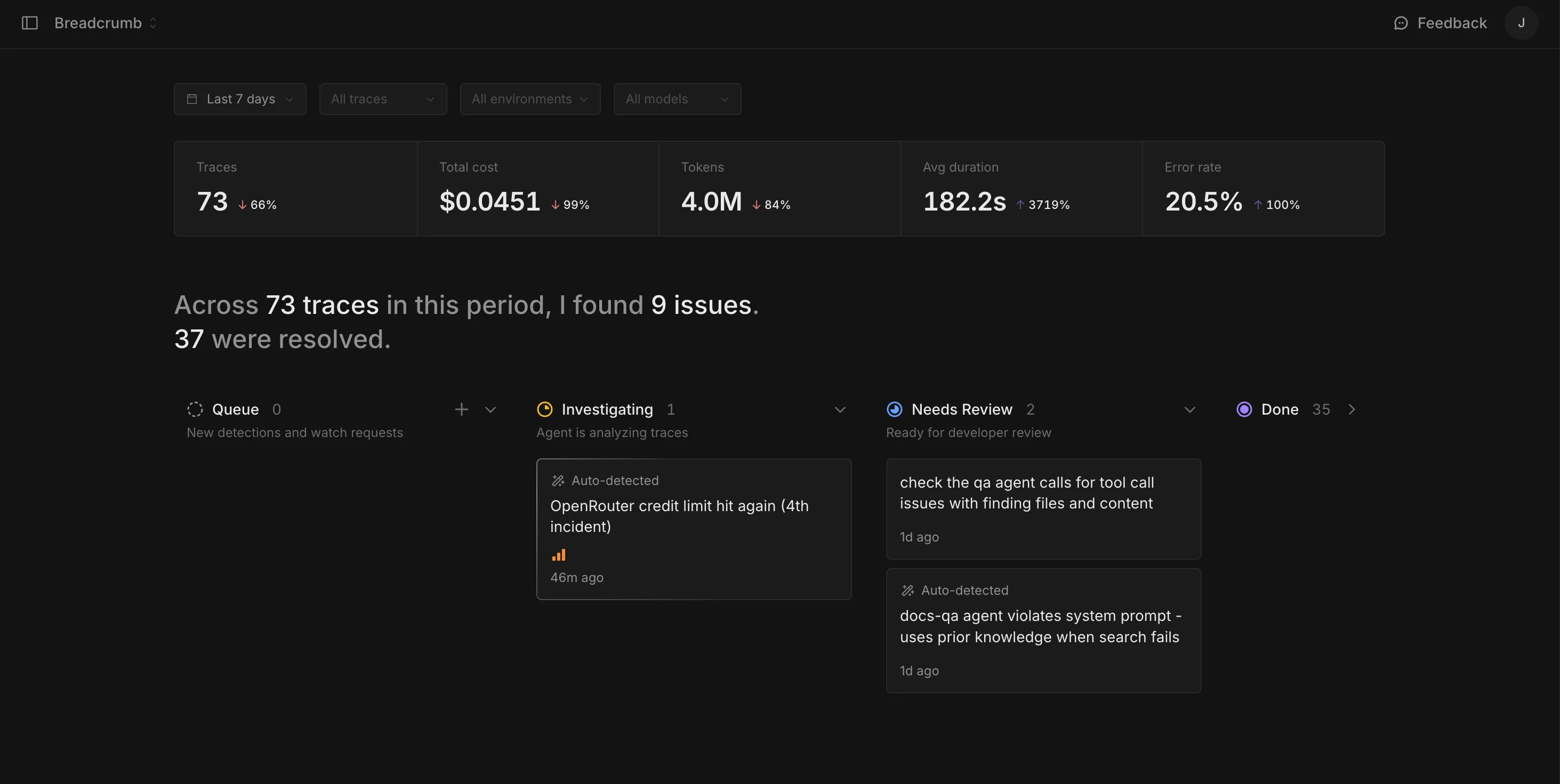

Find issues before your users do.

A monitoring agent that reads every trace, learns your project, and surfaces what matters.

Search agent returning confident answers from an empty context window

Retrieval agent skipping 40% of available documents in the summarize workflow

Cost spike: generateText calls doubled token usage after a prompt template change
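To make the first and third example findings concrete, here is a minimal sketch of the kind of check a trace reader could run. It is not Breadcrumb's implementation: the `LlmSpan` shape, its field names, and the `answeredFromEmptyContext` and `tokenUsageRatio` helpers are hypothetical. It shows how a dashboard can report a zero error rate while a trace-level check still flags a problem.

```typescript
// Hypothetical span shape for illustration only; Breadcrumb's real trace schema may differ.
interface LlmSpan {
  workflow: string;        // e.g. "summarize", the name passed to telemetry(...)
  status: "ok" | "error";  // what an error-rate dashboard counts
  contextDocs: number;     // number of retrieved documents placed in the prompt
  outputText: string;      // the model's answer
}

// Flag spans that "succeeded" but produced an answer with no retrieved context.
function answeredFromEmptyContext(spans: LlmSpan[]): LlmSpan[] {
  return spans.filter(
    (s) => s.status === "ok" && s.contextDocs === 0 && s.outputText.trim().length > 0,
  );
}

// Ratio of mean tokens per call after a change to mean tokens per call before it.
// Both arrays must be non-empty; an empty array yields NaN.
function tokenUsageRatio(before: number[], after: number[]): number {
  const mean = (xs: number[]) => xs.reduce((sum, x) => sum + x, 0) / xs.length;
  return mean(after) / mean(before);
}

const spans: LlmSpan[] = [
  { workflow: "search", status: "ok", contextDocs: 0, outputText: "The answer is 42." },
  { workflow: "search", status: "ok", contextDocs: 3, outputText: "According to the docs, ..." },
];

// The dashboard view: no errors at all.
const errorRate = spans.filter((s) => s.status === "error").length / spans.length;
// The trace-level view: one confident answer built on nothing.
const suspicious = answeredFromEmptyContext(spans);

console.log(`error rate: ${errorRate}, empty-context answers: ${suspicious.length}`);
// prints "error rate: 0, empty-context answers: 1"

console.log(`token usage ratio: ${tokenUsageRatio([400, 600], [1000, 1000])}`);
// prints "token usage ratio: 2"
```

A real monitor would also need to know when the prompt template changed and which calls are comparable; this sketch assumes the caller has already split token counts into before and after groups.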

Other tools log your traces. Breadcrumb understands them.

Every other tracing tool expects you to find the problems yourself. Breadcrumb's agent reads every trace, builds context over time, and gets smarter about what matters in your project.

Three lines of code. Never miss an issue.

Works with the Vercel AI SDK out of the box. Import, initialize, pass telemetry, stay informed.

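```typescript
import { generateText } from "ai";
import { init } from "@breadcrumb-sdk/core";
import { initAiSdk } from "@breadcrumb-sdk/ai-sdk";

// Connect to Breadcrumb: your API key and the URL of your Breadcrumb server
const bc = init({ apiKey, baseUrl });

// Get a telemetry helper for the Vercel AI SDK, bound to this client
const { telemetry } = initAiSdk(bc);

const { text } = await generateText({
  // ...
  // Trace this call under the "summarize" workflow
  experimental_telemetry: telemetry("summarize"),
});
```

Open source. Self-hosted. Your data.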

Deploy on Railway, Fly, or your own servers. Fork it, extend it, run it however you want. No usage fees, no vendor lock-in.